Competitive benchmarking is one of the core reasons brands invest in an AEO platform. You want to know how often you’re mentioned versus competitors, where the gaps are, and which answer engines are pulling ahead.

But the depth of benchmarking varies wildly across tools. Many platforms only let you benchmark across two or three AI engines on their base plans. Others offer limited filtering, shallow citation analysis, and no view into how competitors are actually winning the citations they win.

This article compares five AEO platforms on how well they handle competitor benchmarking across multiple answer engines. We evaluate each tool on four dimensions: breadth of AI platform coverage, depth of competitive analysis (presence, citations, share of voice, sentiment, position), filtering and segmentation capabilities, and the actionability of benchmarking insights.

Assessments are based on hands-on product testing, public product documentation, G2 reviews, published customer case studies, and public pricing.

| Tool | Best for | Starting price | AI platforms tracked | Competitor slots (entry tier) | Standout benchmarking capability |
| --- | --- | --- | --- | --- | --- |
| Scrunch | Enterprise use | $250/mo | 9 (all plan levels include 4; Enterprise includes all 9) | 5 (Core), custom (Enterprise) | Three-layer benchmarking: aggregate share of voice, prompt-level competitor presence, and citation-level analysis with Influence Score |
| Profound | Prompt-level demand and conversation research | Custom (Starter ~$99/mo) | 9+ (full coverage Enterprise only) | Varies by plan | Conversation Explorer surfaces competitive context from millions of AI conversations |
| Peec AI | Affordable competitive monitoring | €85/mo (~$95) | 3 included per plan (add-ons available) | Auto-detected from responses | Side-by-side visibility and position scoring with auto-detected competitor suggestions |
| Otterly | Solo marketers and small teams | $29/mo | 6 (2 require paid add-ons) | Included in monitoring | Share of AI Voice metric showing citation share versus competitors |
| AthenaHQ | Startups running their first AEO program | $95/mo (first month, then ~$295/mo) | 8 (all plans) | Included in monitoring | Ask Athena agentic copilot for plain-language competitive queries |

Scrunch: Best for Enterprise Use

Scrunch is a full-stack AI visibility platform with broad AI platform coverage across all plan levels. It tracks 9 platforms: ChatGPT, Claude, Perplexity, Gemini, Google AI Mode, Google AI Overviews, Microsoft Copilot, Meta AI, and Grok. It’s trusted by enterprise brands like Lenovo, Akamai, and ADP.

Scrunch benchmarks competitors at three layers across multiple answer engines, and this depth is what separates it from the rest of the list.

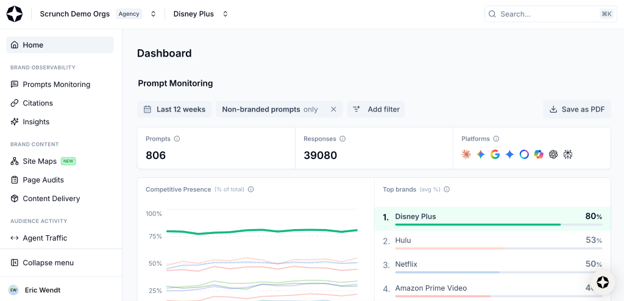

Aggregate benchmarking (Home tab). The Competitive Presence panel shows a side-by-side comparison of your brand’s presence versus competitors across all configured AI platforms over a selected time period. The Top Brands panel ranks every tracked brand by average presence. This is your executive-level view: at a glance, you can see if you’re gaining or losing ground.

Scrunch defines brand presence as how often a brand is explicitly mentioned in the AI-generated answer itself. Mentions that appear only inside cited URLs, page titles, or source snippets don’t count unless the AI includes the brand directly in its response. This matters for benchmarking accuracy across answer engines because some tools inflate presence numbers by counting URL-level mentions.
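To make the distinction concrete, here is a minimal Python sketch of presence counted from the answer text only. It is an illustration of the definition above, not Scrunch's implementation: the `AIResponse` shape, the `brand_presence` function, and the sample brands are all hypothetical.

```python
import re
from dataclasses import dataclass, field


@dataclass
class AIResponse:
    """One AI-generated answer plus the sources it cited (hypothetical shape)."""
    answer_text: str
    cited_urls: list[str] = field(default_factory=list)


def brand_presence(responses: list[AIResponse], brand: str) -> float:
    """Share of responses whose answer text names the brand explicitly.

    Cited URLs are deliberately ignored, so a brand that appears only
    in a source link does not count as present.
    """
    if not responses:
        return 0.0
    # Whole-word, case-insensitive match against the answer text only.
    pattern = re.compile(rf"\b{re.escape(brand)}\b", re.IGNORECASE)
    hits = sum(1 for r in responses if pattern.search(r.answer_text))
    return hits / len(responses)


# Made-up sample data for illustration.
responses = [
    AIResponse("Acme and Globex both offer this.", ["https://acme.example/pricing"]),
    AIResponse("Globex is a common choice.", ["https://acme.example/blog"]),
    AIResponse("Several vendors exist.", []),
    AIResponse("Many teams start with Acme.", []),
]

print(brand_presence(responses, "Acme"))    # 0.5
print(brand_presence(responses, "Globex"))  # 0.5
```

In the second sample response, Acme appears only in a cited URL, so it is not counted. A tool that matched against URLs as well would report 75% presence for Acme on the same data.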

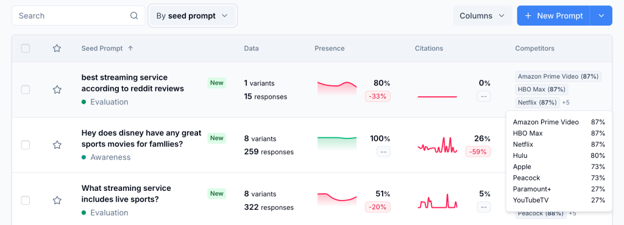

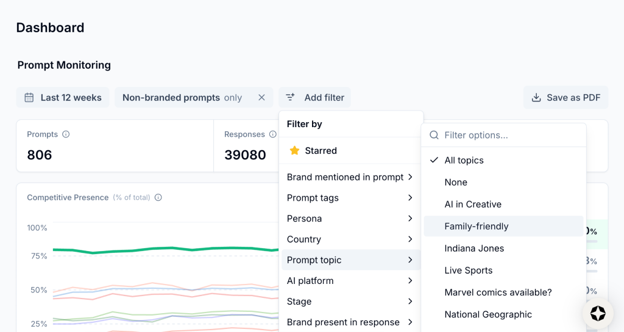

Topic and prompt-level benchmarking (Prompts Monitoring tab). This is where Scrunch gets granular. Every topic and seed prompt in the monitoring table includes a Competitors column showing competitor presence as a percentage. You can drill from topic to seed prompt to individual prompt variants (the AI-platform-specific version of a prompt) and see exactly which competitors appear in each response, how many show up, and what the full AI response looks like.

At the prompt variant level, Scrunch displays a timeline view showing presence, position, sentiment, citation status, and competitor count for each response date. If you want to know why a competitor is winning on Perplexity for a specific prompt but losing on ChatGPT for the same one, this is where you find it.

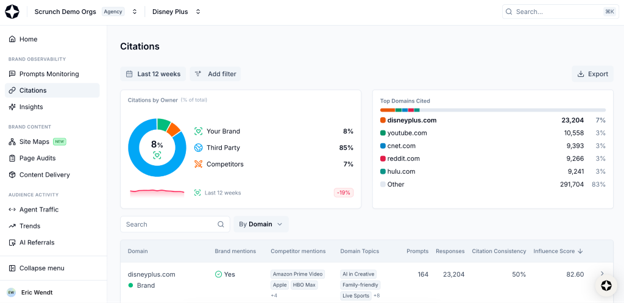

Citation-level benchmarking (Citations tab). Citations by Owner breaks down the percentage of citations belonging to your brand, competitors, or third parties. Top Domains Cited shows which websites are most often cited for your tracked prompts, stack-ranked.
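As a rough sketch of what these two views compute, the Python below tallies citation share by owner and stack-ranks cited domains. The owner labels, function names, and sample domains are assumptions made for illustration, not Scrunch's data model.

```python
from collections import Counter

# Each citation is (domain, owner). The owner labels are assumed here.
citations = [
    ("acme.example", "brand"),
    ("globex.example", "competitor"),
    ("reviews.example", "third_party"),
    ("reviews.example", "third_party"),
]


def share_by_owner(citations: list[tuple[str, str]]) -> dict[str, float]:
    """Fraction of all citations attributed to each owner category."""
    counts = Counter(owner for _, owner in citations)
    total = sum(counts.values())
    return {owner: n / total for owner, n in counts.items()}


def top_domains(citations: list[tuple[str, str]], limit: int = 10) -> list[tuple[str, int]]:
    """Domains ranked by how many times they were cited, most cited first."""
    return Counter(domain for domain, _ in citations).most_common(limit)


print(share_by_owner(citations))
# {'brand': 0.25, 'competitor': 0.25, 'third_party': 0.5}
print(top_domains(citations, limit=1))
# [('reviews.example', 2)]
```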

For every cited domain or URL, Scrunch shows whether your brand is mentioned in the source, which competitors are mentioned, how many prompts have cited the source, citation consistency (percentage of responses citing it), and an Influence Score calculated by multiplying citation frequency by the number of unique prompts. This gives you a weighted view of which citations actually move the needle versus one-off mentions.
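The arithmetic behind these per-source metrics can be sketched in a few lines of Python. The product of citation frequency and unique prompts follows the description above. Treating "citation frequency" as a raw count of citing responses is an assumption, and the `Response` shape and `source_metrics` function are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Response:
    """One AI response for a tracked prompt (hypothetical shape)."""
    prompt_id: str
    cited_urls: frozenset[str]


def source_metrics(responses: list[Response], url: str) -> dict[str, float]:
    """Per-source citation metrics for a single URL."""
    citing = [r for r in responses if url in r.cited_urls]
    # Assumption: citation frequency is the number of responses citing the URL.
    citation_frequency = len(citing)
    unique_prompts = len({r.prompt_id for r in citing})
    consistency = citation_frequency / len(responses) if responses else 0.0
    return {
        "citation_frequency": citation_frequency,
        "unique_prompts": unique_prompts,
        "consistency": consistency,
        "influence_score": citation_frequency * unique_prompts,
    }


# Made-up sample data: four responses across two prompts.
responses = [
    Response("p1", frozenset({"https://a.example", "https://b.example"})),
    Response("p1", frozenset({"https://a.example"})),
    Response("p2", frozenset({"https://a.example"})),
    Response("p2", frozenset()),
]

print(source_metrics(responses, "https://a.example"))
# {'citation_frequency': 3, 'unique_prompts': 2, 'consistency': 0.75, 'influence_score': 6}
print(source_metrics(responses, "https://b.example"))
# {'citation_frequency': 1, 'unique_prompts': 1, 'consistency': 0.25, 'influence_score': 1}
```

Under this reading, a source cited three times across two prompts scores 6, while a one-off citation scores 1, which is the weighting effect the metric is meant to capture.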

Filtering and segmentation. This is where enterprise teams will feel the difference. All benchmarking data can be sliced by AI platform, prompt topic, persona, funnel stage, country, branded versus non-branded prompts, tags, and citation owner.

If your VP of Marketing in EMEA needs to see competitive presence for bottom-of-funnel prompts across ChatGPT and Gemini for the “IT decision-maker” persona, Scrunch can produce that view in a few clicks.

Additional benchmarking features. Scrunch auto-detects competitor suggestions by analyzing prompt responses and AI search trend data, then flags competitive brands for review. You can add them and backfill historical data automatically.

Beyond benchmarking, Scrunch’s Agent Experience Platform (AXP) connects insight to action. Once you spot a competitive gap, you can generate an AI-optimized version of a page and serve it directly to AI agents at the CDN level, without touching the human site experience. No other tool on this list closes the loop from competitive intelligence to content delivery this way.

Scrunch’s competitive benchmarking across multiple answer engines has driven measurable results for customers. Akamai, for instance, used Scrunch to benchmark competitive presence, then deployed AXP to close gaps. Comparing AXP pages to non-AXP pages, Akamai saw 85% more total citations and 38% more unique prompts with citations, and AXP pages have continued to outperform non-AXP pages by 22% on citations since launch. Brand presence for associated non-branded prompts increased by 364% (to roughly 4.6x the starting level), and in ChatGPT alone, brand presence versus competitors for those prompts increased by 133%.

G2 reviewers consistently highlight competitive analysis as a standout feature as well:

“I use Scrunch AI to track my company’s ranking and share of voice across LLMs… giving us a real-time pulse on how our brand is performing against our competition on a granular level.” — Scott S. (G2 review)

“Scrunch has been a solid tool for uncovering AI insights, especially around competitor performance. I really like being able to see how and why competitors are ranking higher than a client.” — Jesse S. (G2 review)

Pricing

- Core: $250/mo (4 LLMs, 5 competitors, 125 prompts, 5 user licenses)

- Enterprise: Custom (all 9 LLMs, custom competitor count, custom prompts, dedicated account team)

- Agency Core: $500/mo (3 brand workspaces, 250 prompts, unlimited licenses)

- 7-day free trial available (credit card required, not charged until after trial ends)

Limitations

Scrunch doesn’t offer general-purpose AI content generation (it’s on the 2026 product roadmap). There’s no native SEO toolkit, so teams using Scrunch alongside traditional SEO will need a separate tool. Some G2 reviewers have noted gaps in export options, though Scrunch’s enterprise API and prebuilt Looker Studio template address this for larger teams.

Profound: Best for Prompt-Level Demand and Conversation Research

Profound is one of the more established AEO platforms in the market. It tracks 9+ answer engines including ChatGPT, Perplexity, Claude, Gemini, Grok, Copilot, Meta AI, DeepSeek, and Google AI Overviews.

Profound’s competitive benchmarking across answer engines centers on three capabilities that set it apart from monitoring-only tools.

Conversation Explorer. Profound claims that this feature surfaces citations, competitive analysis, and trending keywords from millions of real user conversations with AI platforms. You can explore how those conversations reference your brand versus competitors.

Visibility score and share of voice. Profound provides a visibility score and share of voice across all tracked answer engines, giving you a quantified view of how your brand stacks up against competitors. Citation tracking includes citation authority signals that indicate which sources carry the most weight in AI responses.

Prompt volume data. Profound’s Prompt Volumes dataset (Enterprise only) claims access to 400M+ real AI conversations, providing prompt-level demand estimation. This lets you understand not just where you’re visible, but how much search volume exists behind specific prompts.

But prompt volume data across the AEO category is still maturing. Some G2 reviewers and independent reviewers have flagged inconsistencies in Profound’s prompt volume numbers, and no platform has fully solved prompt-level demand estimation yet.

Filtering and segmentation. Profound supports filtering by AI platform, region, and language, along with sentiment and keyword-level insights. However, the breadth of filtering differs significantly by plan. Starter and Growth plans are constrained to a single region and language, which limits international or multi-persona benchmarking. Full multi-region, multi-language filtering requires Enterprise.

Pricing

Profound uses custom pricing across three tiers. The key limitation for benchmarking across answer engines is platform coverage by tier:

- Starter (~$99/mo): ChatGPT only, 50 prompts, 1 seat, 1 region, 1 language. No competitive benchmarking features, no data exports.

- Growth (~$399/mo): Adds Perplexity and Google AI Overviews. Competitive benchmarking and historical data included. Still no Claude, Gemini, Grok, or DeepSeek tracking, no API access.

- Enterprise (custom, reportedly $2,000+/mo): Full multi-platform coverage, Prompt Volumes access, API, SSO, dedicated onboarding.

No self-serve free trial is available.

Limitations

The biggest limitation for competitive benchmarking specifically is that multi-platform benchmarking is locked behind Enterprise pricing. On Starter, you’re benchmarking on ChatGPT alone. On Growth, you add Perplexity and Google AI Overviews but still miss Claude, Gemini, Grok, and others. If the goal is benchmarking across multiple answer engines, Profound requires a significant budget commitment to deliver on that promise.

Profound also has no content delivery layer. Once you’ve identified competitive gaps, acting on them requires separate tools or internal resources. And while Profound’s content generation agents exist on Growth (capped at 6 articles/month) and Enterprise, independent reviewers have noted that execution is still lacking in sophistication and requires significant human editing.

Peec AI: Best for Affordable Competitive Monitoring

Peec AI is an AI visibility platform focused on monitoring brand mentions, sentiment, and competitive positioning across AI search.

Peec AI’s benchmarking experience across multiple answer engines is built around simplicity. The platform auto-detects competitor brands from prompt responses and suggests them for tracking (it recommends 3 to 4 competitors per project). Once competitors are added, the customer-facing dashboard centers on a “Brands” view that compares your brand to competitors across tracked prompts with side-by-side visibility, sentiment, and position scores.

For teams that want a clean, focused competitive view without the complexity of multi-layered analytics, this is an appealing entry point. You can quickly see where you stand versus competitors without spending time configuring filters or drilling through nested tabs.

AI platform coverage. Peec AI’s base plan includes three AI models of your choice (typically ChatGPT, Claude, and Perplexity). Additional models like Gemini, Copilot, Grok, and AI Mode are available as paid add-ons, priced between €30 and €140 per month depending on your plan tier. This modular approach gives teams flexibility to pay only for the platforms they care about, but the costs add up quickly if you need broad multi-engine benchmarking.

Filtering and segmentation. Peec AI supports filtering by AI model, date range, and specific competitors. Multi-country tracking is available on the Pro plan and above (up to 3 countries per project on Pro, more on Advanced). However, filtering granularity is shallower than what enterprise tools offer. There are no funnel stage, persona, or tag-based filters on standard plans, which limits the ability to segment benchmarking data by buyer journey or audience type.

Pricing

- Starter: $95/mo. 50 prompts, 3 AI models, 1 project, unlimited users. No Looker, no API.

- Pro: $245/mo. 150 prompts, 3 AI models, 2 projects, multi-country tracking.

- Advanced: $495/mo. 350 prompts, 3 AI models, 5 projects, expanded multi-country.

- Enterprise: Unlimited prompts, all AI models, API, SSO, dedicated support.

All plans include unlimited user seats and daily tracking. A 14-day free trial is available.

Limitations

The core limitation for multi-engine competitive benchmarking is cost at scale. Three AI models are included per plan, and each additional model is a paid add-on. A team that needs coverage across six or seven platforms will pay significantly more than the base plan price suggests.

Peec AI is also monitoring-only. There’s no site auditing, no content optimization, and no content delivery capability. Once you’ve identified a competitive gap, you’ll need separate tools or internal resources to act on it.

Otterly: Best for Solo Marketers and Small Teams

Otterly is an accessible AI search monitoring platform with the lowest entry point on this list at $29/mo.

Otterly’s core benchmarking metric is Share of AI Voice, which measures the citation share you own versus competitors across tracked prompts. It covers 6 AI platforms: ChatGPT, Google AI Overviews, Perplexity, AI Mode, Gemini, and Copilot (though AI Mode and Gemini are paid add-ons on lower tiers).

Competitive benchmarking reports across multiple answer engines show how your brand compares to competitors over time, and the platform includes alerts that notify you when brand mention patterns shift. For a solo marketer or small team that needs to keep a pulse on competitive positioning without configuring complex dashboards, this is a practical setup.

Filtering and Segmentation

Otterly supports filtering by AI platform, date range, and competitors. Multi-country support is available (50+ countries on all plans). However, there’s no filtering by persona, funnel stage, or custom tags. This is unlikely to be a dealbreaker for solo marketers and small teams. But it’s a major issue for enterprise teams managing benchmarking across business units or buyer segments.

Pricing

- Lite: $29/mo. 15 prompts, unlimited brand reports, unlimited team members.

- Standard: $189/mo. 100 prompts, unlimited workspaces, Looker Studio connector.

- Premium: $489/mo. 400 prompts, priority support.

- Enterprise: 14-day free trial available, no credit card required.

AI Mode and Gemini are available as paid add-ons ranging from $9 to $149/mo depending on plan tier, which narrows the cost advantage for teams that need full multi-platform benchmarking.

Limitations

The main limitation for multi-engine benchmarking is the add-on pricing for AI Mode and Gemini. The $29/mo entry point is attractive, but once you layer on additional platforms, the effective cost rises and the gap with mid-tier competitors narrows.

Otterly is also a monitoring and audit platform. There’s no content delivery, no automated content optimization, and no content generation. Teams that identify competitive gaps through Otterly’s benchmarking will need separate tools to act on those insights.

Enterprise security features are limited as well. There’s no documented SSO, RBAC, or SOC 2 compliance, which makes Otterly a better fit for smaller teams than for organizations with strict security and access control requirements.

AthenaHQ: Best for Startups Running Their First AEO Program

AthenaHQ is an AEO/GEO platform marketed as a “command center” for AI search. It’s Y Combinator-backed and includes 8 AI platforms on all plans: ChatGPT, Perplexity, Gemini, Google AI Mode, Google AI Overviews, Claude, Copilot, and Grok.

AthenaHQ’s most distinctive benchmarking feature is Ask Athena, an agentic AI copilot that lets you ask plain-language questions about your competitive positioning. Instead of navigating dashboards, you can type something like “Why is my competitor ranking above me on ChatGPT for project management prompts?” and get a data-backed answer drawn from your account’s real-time monitoring data.

Beyond Ask Athena, the platform offers competitor share of voice comparison and competitor monitoring with impersonation tracking (flagging when AI platforms misattribute competitor claims to your brand or vice versa). The Action Center maps competitive gaps to specific on-page and off-page fixes, connecting benchmarking data to a prioritized list of things to do next.

Integrations. AthenaHQ integrates with GA4, Google Search Console, Shopify, Webflow, and Framer. Enterprise plans add Tableau, Power BI, and Looker. The Shopify integration is worth highlighting for e-commerce brands: it lets you publish AEO-optimized content and attribute revenue to AI search, connecting visibility data to actual sales.

Pricing

AthenaHQ uses a credit-based pricing model, where 1 credit equals 1 AI response analysis:

- Self-Serve: $295/mo. 3,600 credits, all 8 AI platforms, unlimited seats.

- Enterprise: API access, SSO, RBAC, advanced security, dedicated support.

A 67% discount on the first month is currently available. There’s no free trial.

Limitations

The credit-based pricing model is the main consideration for benchmarking at scale. Credits are consumed with every AI response analyzed, which means costs scale with monitoring volume in a way that can be harder to forecast than prompt-based pricing models. Teams tracking hundreds of prompts across 8 platforms will consume credits quickly, and additional credits cost $100 per 1,250.
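A back-of-the-envelope forecast shows how quickly credits add up. The sketch below uses the figures quoted in this section (3,600 included credits at $295/mo, $100 per 1,250 additional credits, 1 credit per response analyzed). The assumptions are that every prompt is run once on every platform per monitoring run, and that extra credits are bought in whole blocks. Both are illustrative, not confirmed billing rules, and `monthly_cost` is a hypothetical helper.

```python
import math

# Figures quoted in the pricing section above.
INCLUDED_CREDITS = 3_600
BASE_PRICE_USD = 295
EXTRA_BLOCK_CREDITS = 1_250
EXTRA_BLOCK_PRICE_USD = 100


def monthly_cost(prompts: int, platforms: int, runs_per_month: int) -> tuple[int, int]:
    """Return (credits needed, estimated monthly cost in USD).

    Assumes one response, and therefore one credit, per prompt per
    platform per run, and that extra credits come in whole blocks.
    """
    credits_needed = prompts * platforms * runs_per_month
    overage = max(0, credits_needed - INCLUDED_CREDITS)
    blocks = math.ceil(overage / EXTRA_BLOCK_CREDITS)
    return credits_needed, BASE_PRICE_USD + blocks * EXTRA_BLOCK_PRICE_USD


# 100 prompts on 8 platforms, run weekly (4 runs per month).
print(monthly_cost(100, 8, 4))  # (3200, 295)

# 200 prompts on 8 platforms, run weekly.
print(monthly_cost(200, 8, 4))  # (6400, 595)
```

Under these assumptions, doubling the prompt set from 100 to 200 roughly doubles the bill, and a daily rather than weekly cadence would multiply credit use by about seven.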

API access, SSO, and RBAC are all locked to Enterprise, which limits the platform’s fit for organizations with strict security requirements on standard plans. And while AthenaHQ’s customer base includes recognizable names, it’s concentrated in earlier-stage and growth-stage companies.
